In sensor-driven interactive installations, the most fragile part is often the IO layer: the input and output glue between sensors, content, and hardware. It has to accept many different formats, keep timing stable, and stay debuggable while artists and engineers keep changing things on site.

When that layer works properly, the technology fades into the background and the space feels responsive and intuitive.

Treating TouchDesigner as an IO hub makes it easier to connect engines and devices that were not designed to talk to each other directly. In an interactive system integration workflow, a single TouchDesigner project can take on several roles at once:

- Ingest pre-rendered and pre-animated material, from audio stems and texture sequences to 3D meshes, and control how it is mapped, layered, and timed in real time.

- Capture live streams from engines such as Unity or Unreal Engine for monitoring, routing, compositing, or feeding those signals back into other parts of the system.

- Play back video and sound assets in sync with external inputs such as biometrics, LiDAR, cameras, or show control timelines.

- Run or orchestrate external processes such as a Stable Diffusion pipeline, treating generated frames or textures as additional media sources in the real-time graph.
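Each of these roles has the same underlying requirement. Every stream arrives in its own format and on its own schedule, so the hub first converts it into one common shape and stamps it with a single clock. The sketch below shows one way that normalisation step could look. It is written in plain Python and deliberately does not use TouchDesigner's operator API. The payload formats, field names (`hr`, people count, nearest distance), the parser functions and the `Event` record are all hypothetical, chosen only to illustrate the pattern.

```python
import json
import time
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class Event:
    """One normalised input sample, stamped by the hub's single clock."""
    source: str
    t: float                  # seconds on the hub's monotonic clock
    values: Dict[str, float]  # flat numeric channels, whatever the original format


# Hypothetical parsers: each turns one raw payload format into a flat dict of floats.
def parse_json_biometrics(raw: bytes) -> Dict[str, float]:
    # e.g. b'{"hr": 72}' -> heart rate in beats per minute
    data = json.loads(raw)
    return {"heart_rate": float(data["hr"])}


def parse_csv_lidar(raw: bytes) -> Dict[str, float]:
    # e.g. b"3,1.25" -> number of detected people, nearest distance in metres
    count, nearest = raw.decode().strip().split(",")
    return {"people": float(count), "nearest_m": float(nearest)}


# Registry of known sources; adding a device means adding one parser here.
PARSERS: Dict[str, Callable[[bytes], Dict[str, float]]] = {
    "biometrics": parse_json_biometrics,
    "lidar": parse_csv_lidar,
}


def normalize(source: str, raw: bytes,
              clock: Callable[[], float] = time.monotonic) -> Event:
    """Parse a raw payload with its source's parser and stamp it on arrival."""
    parser = PARSERS[source]  # raises KeyError for an unregistered source
    return Event(source=source, t=clock(), values=parser(raw))
```

If you pass a fixed clock for reproducibility, `normalize("lidar", b"3,1.25", clock=lambda: 10.0)` returns an `Event` with `t == 10.0` and `values == {"people": 3.0, "nearest_m": 1.25}`. The clock is injected as a parameter so that tests, or a show-control timecode, can replace `time.monotonic` without any change to the parsers.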

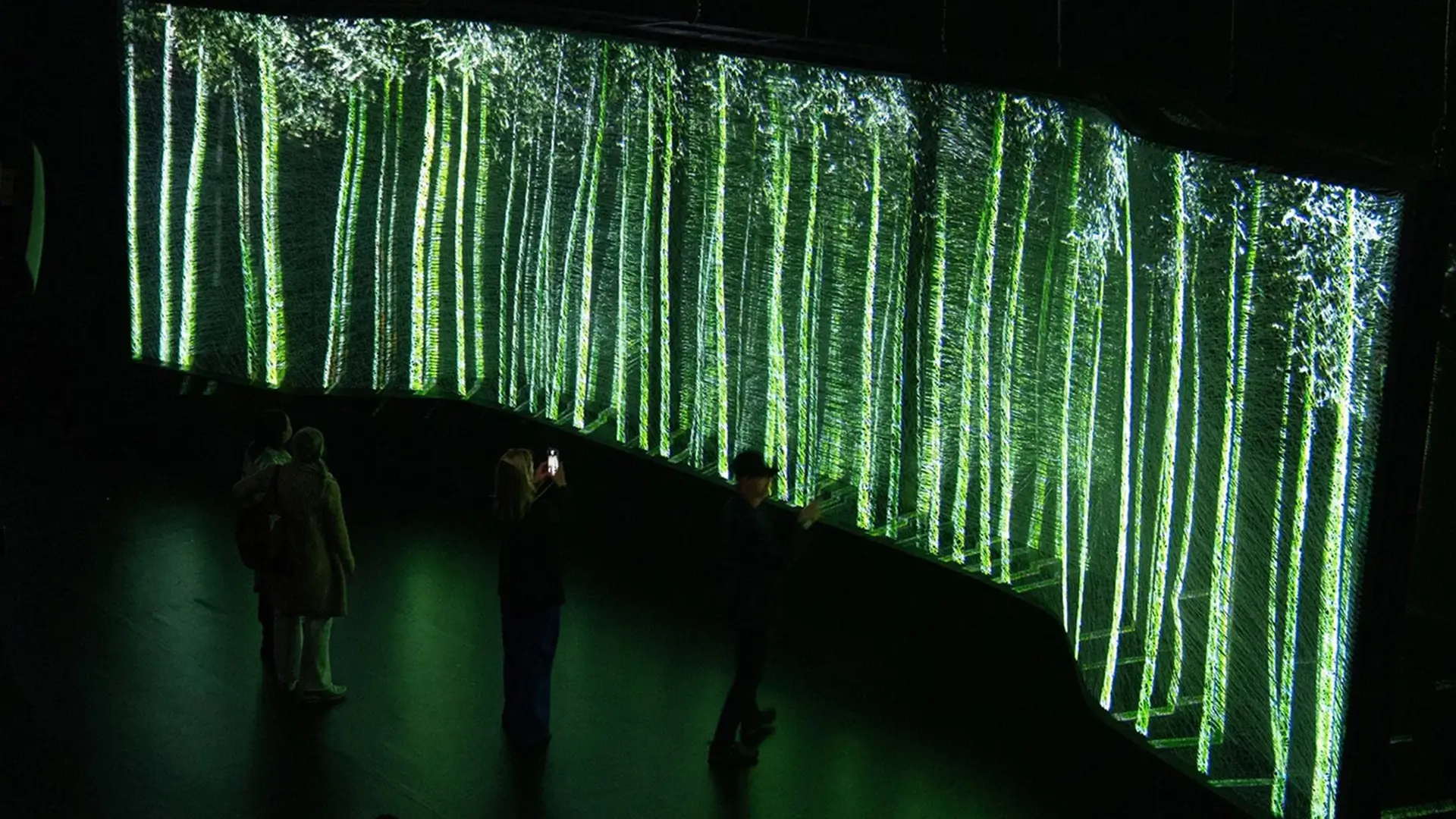

The same IO pattern runs through our own projects, including the Nespresso New York interactive video wall. There, computer vision and LiDAR feed a shared control layer that drives large-scale responsive visuals. The mix of sensors changes from project to project, but the structure of sensor-driven interactive installations stays similar when TouchDesigner sits at the centre.

For technical directors, this makes TouchDesigner a practical base for interactive system integration. It keeps sensor streams, audio, textures, and 3D meshes aligned to one timing model. It supports a real-time media pipeline that spans TouchDesigner and Unity or Unreal Engine. It also gives you a single place to inspect routing, logs, and failures.
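One common way to keep streams aligned to a single timing model is sample-and-hold against the render frame. Each stream keeps only its latest timestamped value. The hub then reads every stream at the same frame time and flags any stream that has gone quiet. The sketch below illustrates that technique and is an assumption on our part, not a description of how TouchDesigner handles timing internally. `FrameSampler`, the 0.5 s staleness threshold and the stream names are hypothetical.

```python
import logging
from dataclasses import dataclass, field
from typing import Dict, Optional, Tuple

log = logging.getLogger("io_hub")  # hypothetical logger name


@dataclass
class FrameSampler:
    """Sample-and-hold: keep the latest value per stream, read all streams at one frame time."""

    stale_after: float = 0.5  # seconds without new data before a stream counts as stale (hypothetical)
    latest: Dict[str, Tuple[float, float]] = field(default_factory=dict)  # stream -> (t, value)

    def push(self, stream: str, t: float, value: float) -> None:
        """Store a sample stamped on the hub clock; drop it if it is older than the one held."""
        prev = self.latest.get(stream)
        if prev is not None and t < prev[0]:
            log.warning("out-of-order sample on %s: %.3f < %.3f", stream, t, prev[0])
            return
        self.latest[stream] = (t, value)

    def sample(self, frame_t: float) -> Dict[str, Optional[float]]:
        """Return every stream's held value at frame_t, or None where the stream has gone stale."""
        frame: Dict[str, Optional[float]] = {}
        for stream, (t, value) in self.latest.items():
            age = frame_t - t
            if age > self.stale_after:
                log.warning("stream %s stale by %.3f s", stream, age)
                frame[stream] = None
            else:
                frame[stream] = value
        return frame


if __name__ == "__main__":
    logging.basicConfig(level=logging.INFO)
    sampler = FrameSampler(stale_after=0.5)
    sampler.push("heart_rate", 10.0, 72.0)
    sampler.push("people", 10.4, 3.0)
    sampler.push("people", 10.2, 5.0)  # arrives late: logged and ignored
    print(sampler.sample(10.6))  # -> {'heart_rate': None, 'people': 3.0}
```

Stale and out-of-order samples are logged in one place. During on-site debugging, a dropped sensor then shows up as a warning rather than as a visual that has silently frozen.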

Related capability: Real-time interactive systems.

Related projects: Lexus Milan Design Week interactive installation and Nespresso New York interactive video wall.