TouchDesigner development is most useful when one environment needs to handle sensing, protocol logic, media playback, real-time rendering, and control together. The focus here is practical implementation: patch structure, timing, state logic, and robust operation on site.

The aim is a flexible operational layer where sensor data, content behaviour, real-time graphics, external assets, and output logic can be built and tested together, then maintained as one system once it is installed in public space.

One layer across the full chain

A responsive environment depends on clean joins between sensing, playback, lighting, and control. If sensing lives in one application, playback in another, and lighting in a third, timing can drift between them and behaviour becomes harder to trust. TouchDesigner makes it possible to build one integration layer across all of those elements. Whether the project involves LiDAR, depth cameras, computer vision, DMX, Art-Net, sACN, OSC, NDI, Spout, Unreal Engine, texture sequences, or external media servers, the goal is a single readable system in which every input, rule, and output state can be inspected in one place.

That visibility is what separates a well-structured integration from a collection of connected parts. In a public installation, what matters is that the system starts reliably, faults can be diagnosed quickly, and behaviour stays predictable when an input drops or a component restarts. The service covers technical structuring, system logic, and patch assembly to that standard.

Scope of development

The work can include sensor strategy and placement, real-time content creation inside TouchDesigner, preparation and integration of external 3D assets or rendered sequences, protocol bridging between lighting, AV, and control systems, state machines for experience sequencing, and the logic that translates raw sensor input into outputs the room can act on. The goal is systems that are expressive and maintainable, structured clearly enough for downstream technical teams to support after handover.
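State machines for experience sequencing, and the logic that turns raw sensor input into room behaviour, can be sketched in plain Python, the scripting language TouchDesigner embeds. This is a minimal, hypothetical example: the class name, the three states, and every threshold are illustrative assumptions rather than details from any project described here, and it avoids TouchDesigner-specific calls so that it runs standalone.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional


class State(Enum):
    IDLE = "idle"          # attract loop, nobody present
    ENGAGED = "engaged"    # visitor present, experience running
    COOLDOWN = "cooldown"  # visitor has left; wait before resetting


@dataclass
class ExperienceStateMachine:
    """Hypothetical presence-driven sequencer. All thresholds are illustrative."""
    enter_threshold: float = 0.6  # presence level needed to start the experience
    exit_threshold: float = 0.3   # lower exit level (hysteresis) stops flicker at the boundary
    cooldown_s: float = 5.0       # seconds spent in COOLDOWN before returning to IDLE
    dropout_s: float = 2.0        # no sensor frame for longer than this counts as a dropped input
    state: State = State.IDLE
    _entered_at: float = field(default=0.0, init=False, repr=False)
    _last_frame_at: Optional[float] = field(default=None, init=False, repr=False)

    def _go(self, new_state: State, now: float) -> None:
        self.state = new_state
        self._entered_at = now

    def on_frame(self, presence: float, now: float) -> State:
        """Feed one normalised sensor reading (0..1) taken at time `now` in seconds."""
        self._last_frame_at = now
        if self.state is State.IDLE and presence >= self.enter_threshold:
            self._go(State.ENGAGED, now)
        elif self.state is State.ENGAGED and presence < self.exit_threshold:
            self._go(State.COOLDOWN, now)
        elif self.state is State.COOLDOWN and presence >= self.enter_threshold:
            self._go(State.ENGAGED, now)
        return self.tick(now)

    def tick(self, now: float) -> State:
        """Apply time-based rules; call once per frame even when no reading arrives."""
        dropped = (self._last_frame_at is not None
                   and now - self._last_frame_at > self.dropout_s)
        if dropped and self.state is not State.IDLE:
            # Sensor has gone silent: fall back to a known safe state instead of freezing.
            self._go(State.IDLE, now)
        elif self.state is State.COOLDOWN and now - self._entered_at >= self.cooldown_s:
            self._go(State.IDLE, now)
        return self.state
```

Separate enter and exit thresholds keep noisy presence data from toggling the experience on and off, and the dropout rule gives a predictable fallback when a sensor stops reporting. In a patch, `on_frame` and `tick` could be driven from a per-frame callback such as an Execute DAT, with the resulting state fanned out to playback and lighting; that wiring is not shown here.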

Recent work

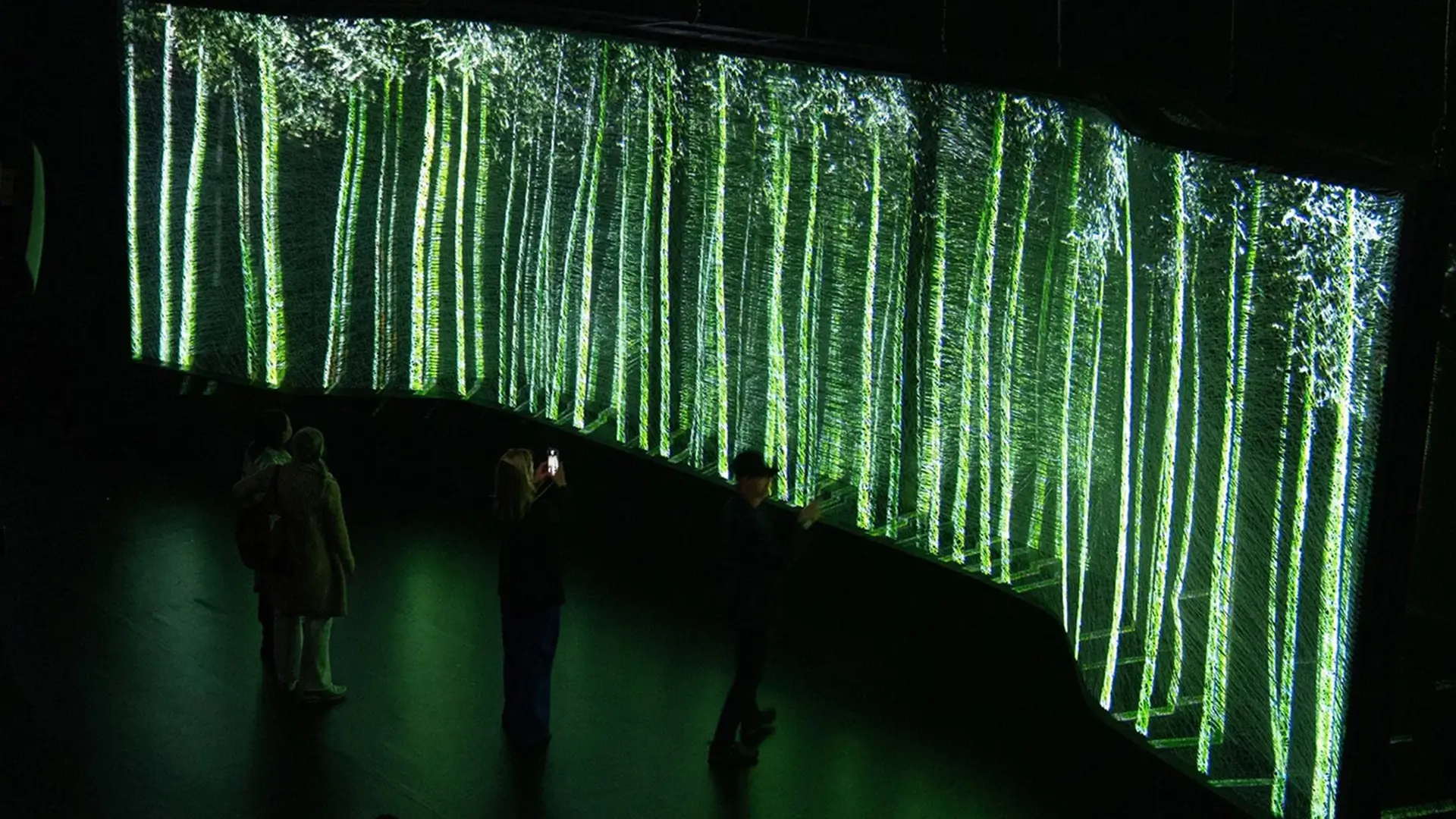

At Lexus Milan Design Week, TouchDesigner sat inside a larger immersive system, connecting real-time visual behaviour to biometric input and projection logic across a translucent spatial surface. The installation linked colour, light, and sound into one responsive environment driven by visitor presence.

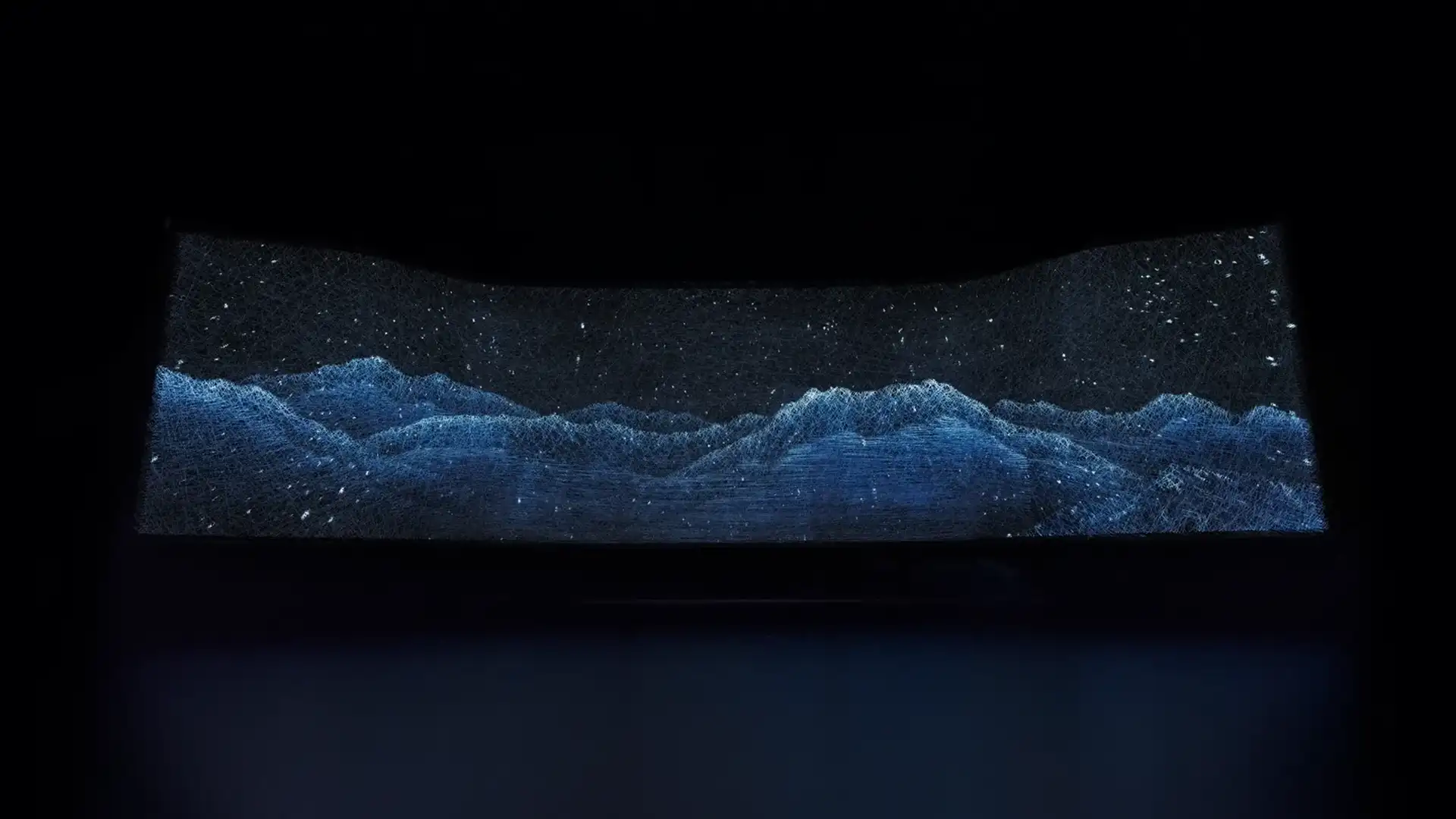

At Nespresso New York, computer vision and sensing data fed a generative fluid environment that turned visitor movement into a slow visual language across the flagship store's video wall.

The sensors change from project to project. The role of the TouchDesigner layer remains consistent: hold the behaviour together and keep the system readable so the experience stays stable enough to run in public conditions.